Prospect 12

Prospect 12 was PAVE’s fully autonomous vehicle project, which started as an entry in the 2007 DARPA Urban Challenge. The vehicle was a 2005 Ford Escape Hybrid, equipped with several sensors and modified to allow computer-controlled driving while still comfortably seating five and allowing human driver control with the flip of a switch.

The team worked on improving the vehicle’s vision and navigation software and on making the system more robust. The project also involved hardware improvements and expansion, such as adding new sensors to the vehicle.

Faculty Adviser: Professor Alain Kornhauser

Project Manager: Ryan Soussan ’13

Project Members

Aman Sinha ’13

Arul Suresh ’13

Laszlo Szocs ’13

Electronics and Computer Hardware

On the software front, we ported all of our code to C++ in order to use the Inter-Process Communication framework (IPC) developed by Carnegie Mellon. In our experience, IPC requires much less overhead and is easier to implement than Microsoft Robotics Developer Studio.

A significant effort was made to generalize our software so that it could be easily ported to other projects, such as our Intelligent Ground Vehicle Competition (IGVC) robots. To that end, the vision algorithms for our IGVC robot Phobetor were similar to those used in Prospect 12.

Control

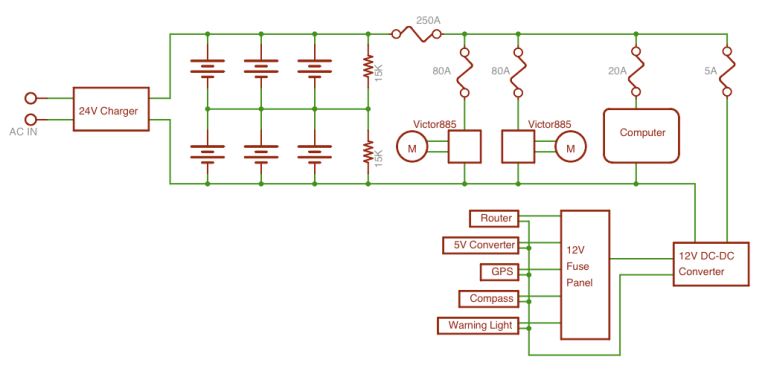

Prospect 12 was computer-controllable, meaning that steering, throttle, transmission, and brakes could all be set in software. We connected to the engine computer to use the power steering and throttle (the gas pedal is electronic), and mounted actuators to shift gears and apply the brakes. The control signals from the computer went to two LabJack UE9 devices for conversion between digital and analog signals, were electrically isolated (to protect the computers and LabJacks), were processed by custom circuitry on our main board [picture], and ran through wires laid throughout the car.

Numerous system designs were tested before we settled on one that was reliable and robust. For example, electronically controlled brakes proved too cumbersome and unreliable to implement, so the team decided to use a linear actuator instead. Much of what we learned came from reading manuals and reverse-engineering components to get everything working and integrated.

Sensors

At PAVE, we focused on using stereo cameras as the main sensors for our robots. Compared to other sensors like LIDAR units, stereo cameras are inexpensive while still providing rich, detailed views of the environment. We believed this combination made them excellent sensors for a generation of widely available, reliable autonomous vehicles.

Prospect 12 used three black-and-white stereo cameras in the Urban Challenge, but to better prepare it for navigating environments with signs, traffic lights, and other detailed features, we switched to color stereo cameras as our main sensors. The Videre STOC cameras were mounted on a new roof rack, which gave them an excellent view of the road, and were connected to several computers mounted on a server rack in the back for image processing.

The vehicle also used a GPS, accelerometers and gyros, and a wheel encoder for estimating position and velocity. We also considered using sonar sensors in the bumpers (as some commercial vehicles do now) to assist in low-speed, close maneuvers such as parallel parking.

Software

Phobetor’s software architecture was completely rewritten for the 2010 IGVC. The underlying robotics framework made use of IPC++, an object-oriented wrapper of Carnegie Mellon’s Inter-Process Communication (IPC) platform. Each software component ran as a discrete program communicating with the central server via TCP. Message publishing and subscription, serialization, and timing were all built around a custom-developed C++ API.

One of Phobetor’s key software innovations was the use of the SRCDKF (Square Root Central Difference Kalman Filter) to combine data from all of its state sensors (such as compass, GPS, and so on) and maintain an optimal estimate of a vector that defines the state of the robot.

The Videre stereo camera created a 3D point cloud in which obstacles were detected using an algorithm that searched for points that were approximately vertically aligned with one another. The lane detection algorithm, originally developed for the 2007 DARPA Urban Challenge, applied yellow, white, and pulse-width filters and fused the resulting images, along with the obstacle detection image, into the same frame.
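
As a rough illustration of the obstacle test described above (points that are approximately vertical to each other), the following Python sketch bins a point cloud into ground-plane cells and flags cells whose points span a minimum height. The function name, the coordinate frame (z pointing up), the grid cell size, and the thresholds are all assumptions made for illustration; this is not Phobetor’s actual implementation.

```python
import math
from collections import defaultdict


def detect_obstacle_cells(points, cell_size=0.25, min_height_span=0.3, min_points=2):
    """Return the set of (ix, iy) grid cells containing vertically stacked points.

    points: iterable of (x, y, z) tuples in metres, with z pointing up
    (an assumed frame; the real camera frame may differ).
    """
    # Group point heights by the ground-plane cell they fall into.
    heights = defaultdict(list)
    for x, y, z in points:
        cell = (math.floor(x / cell_size), math.floor(y / cell_size))
        heights[cell].append(z)

    # A cell counts as an obstacle when several points share (roughly) the same
    # ground position but cover a large range of heights.
    obstacles = set()
    for cell, zs in heights.items():
        if len(zs) >= min_points and max(zs) - min(zs) >= min_height_span:
            obstacles.add(cell)
    return obstacles


# Hypothetical data: a post near x = 1.1 m and flat ground near x = 2.1 m.
cloud = [
    (1.10, 0.10, 0.00), (1.10, 0.10, 0.50), (1.12, 0.12, 0.90),  # stacked points
    (2.10, 0.10, 0.00), (2.15, 0.10, 0.02),                      # ground points
]
print(detect_obstacle_cells(cloud))  # {(4, 0)}
```

Only the cell holding the stacked points is reported; the ground points share a cell but span just 2 cm in height, so they are ignored.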

To ensure that lane markings in shadows were not ignored, a white filter operating in hue-saturation-value (HSV) space was implemented. The RANSAC (Random Sample Consensus) algorithm was then used to fit parabolas to the frame, detecting lanes with great accuracy and even tolerating gaps within the image.

For path planning, Phobetor used the Anytime D* map-search algorithm to search through the cost map generated from environmental data. The algorithm allowed the robot to quickly plan paths around any obstacles it detected in real time, and if the robot sensed that more computing time was available, the software would take additional time to plan the optimum trajectory towards the desired waypoint.

Additionally, the autonomous platform used a crosstrack error navigation law to follow paths, allowing the algorithm to plan accurate, smooth movements for the robot. A speed controller was also implemented to eliminate sudden acceleration or deceleration (except in the case of emergency stops).
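
The RANSAC parabola fit mentioned above for lane detection can be sketched in a few lines of Python. This is a minimal, generic version under stated assumptions: lane pixels are given as (x, y) pairs, the lane is modelled as y = a·x² + b·x + c, and the function names, iteration count, and inlier tolerance are hypothetical choices rather than values from the Phobetor code.

```python
import random


def fit_parabola_3pts(p1, p2, p3):
    """Exact parabola y = a*x^2 + b*x + c through three points, or None if degenerate."""
    (x1, y1), (x2, y2), (x3, y3) = p1, p2, p3
    denom = (x1 - x2) * (x1 - x3) * (x2 - x3)
    if denom == 0:  # two sample points share the same x
        return None
    a = (x3 * (y2 - y1) + x2 * (y1 - y3) + x1 * (y3 - y2)) / denom
    b = (x3 ** 2 * (y1 - y2) + x2 ** 2 * (y3 - y1) + x1 ** 2 * (y2 - y3)) / denom
    c = (x2 * x3 * (x2 - x3) * y1 + x3 * x1 * (x3 - x1) * y2
         + x1 * x2 * (x1 - x2) * y3) / denom
    return a, b, c


def ransac_parabola(points, iterations=200, tolerance=0.1, seed=0):
    """Return (best_model, inliers), keeping the model that explains the most points."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    best_model, best_inliers = None, []
    for _ in range(iterations):
        model = fit_parabola_3pts(*rng.sample(points, 3))
        if model is None:
            continue
        a, b, c = model
        inliers = [(x, y) for x, y in points
                   if abs(a * x * x + b * x + c - y) <= tolerance]
        if len(inliers) > len(best_inliers):
            best_model, best_inliers = model, inliers
    return best_model, best_inliers


# Hypothetical lane points on y = 0.5x^2 - x + 2, with a gap at x = 4, 5
# and three outliers that do not belong to the lane.
lane_points = [(float(x), 0.5 * x * x - x + 2.0) for x in (0, 1, 2, 3, 6, 7, 8, 9)]
lane_points += [(2.5, 9.0), (6.5, -3.0), (8.5, 1.0)]

model, inliers = ransac_parabola(lane_points)
print(model, len(inliers))
```

Because each candidate model is built from only three randomly chosen points and scored by how many other points agree with it, outliers and gaps in the lane marking have little influence on the final fit.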